Fine-Tune AI Models from the Google LLM Family Using Vertex AI and Python

Fine-tuning is a powerful way to specialize a pre-trained AI model for your own tasks. Compared with prompting alone, it can further increase output accuracy, and it costs far less than developing a new AI model from scratch.

In this article, we walk through in detail how to fine-tune AI models from the Google LLM family using Vertex AI and Python. Let's go!

Table of Contents: Google AI Model Fine-Tuning Using Python

- What Is AI Model Fine-Tuning

- Data Preparation for Google AI Model Tuning

- Tuning Model Creation

- Waiting for Tuning and Calling the Model Using Python

- Google AI Model Tuning Cost

- Wrap-up

What Is AI Model Fine-Tuning

AI model fine-tuning is the process of customizing the output of a pre-trained AI model, and increasing its accuracy, by further training it on your own datasets. The aim is to keep the original capabilities of the pre-trained model while adapting it to more specialized use cases. Building on an existing, sophisticated model in this way lets machine learning developers create effective models for specific use cases more efficiently. This approach is especially beneficial when computational resources are limited or relevant data is scarce, because there is no need to train a base model from scratch.

For example, suppose you are a blogger and social media content creator. You might fine-tune a model so that it replies to comments in your own tone of voice, or writes blog articles that carry your unique voice and value propositions. In this case, fine-tuning lets you automate these tasks by integrating the tuned model into your workflow.

Data Preparation for Google AI Model Tuning

Tuning from the Vertex AI Language section requires the JSON data to use exactly two keys: input_text and output_text. These key names are fixed; you cannot rename them.

input_text is where you put the prompt: the context, instructions, or samples of purely AI-generated content that the model will receive. output_text, conversely, is where you teach the model the answer you expect for that input. Taking the blogger and social media content creator as an example, you can put full posts, articles, or other content samples written in your own style in output_text.
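To make the format concrete, here is a minimal sketch of how the blogger's training examples might look as Python records, plus a small check that each record uses exactly the two required keys. The sample texts and the helper name `check_record` are hypothetical, for illustration only.

```python
# Keys required by Vertex AI Language tuning, as described above
REQUIRED_KEYS = {"input_text", "output_text"}

# Hypothetical training examples for the blogger use case
examples = [
    {
        "input_text": "Reply to this comment: 'Loved your post on budget travel!'",
        "output_text": "Thank you so much! Budget travel is my favourite topic, more tips coming soon.",
    },
    {
        "input_text": "Write a short intro for a blog post about morning routines.",
        "output_text": "Mornings set the tone for everything that follows, so let's make them count.",
    },
]


def check_record(record):
    """Return True if the record has exactly the two required keys with non-empty text."""
    if set(record) != REQUIRED_KEYS:
        return False
    # Both fields should be non-empty strings
    return all(isinstance(record[k], str) and record[k].strip() for k in REQUIRED_KEYS)


bad = {"prompt": "Hi", "output_text": "Hello"}  # wrong key name, so it is rejected
print([check_record(r) for r in examples], check_record(bad))
```

Running it prints `[True, True] False`: both sample records pass, while the record using `prompt` instead of `input_text` is rejected.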

Once the dataset is ready, we need to convert it to JSON Lines (JSONL), one JSON object per line, as this is the format required by Google Vertex AI Language tuning. A sample using a pandas DataFrame is as follows:

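```python
import pandas as pd

# df2: your DataFrame with "input_text" and "output_text" columns
df2.to_json('filename.jsonl', orient='records', lines=True)  # one JSON object per line
pdRead = pd.read_json('yourfilepath.jsonl', lines=True)  # read a JSONL file back to check it
```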
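As a self-contained sketch, the round trip below writes a small DataFrame to a JSONL file in a temporary directory and reads it back, which is a quick way to confirm the file holds one JSON object per line with only the `input_text` and `output_text` keys. The file name `train.jsonl` and the sample rows are assumptions.

```python
import json
import os
import tempfile

import pandas as pd

# Hypothetical sample rows; in practice this is your prepared DataFrame (df2)
df2 = pd.DataFrame(
    {
        "input_text": ["Reply to: 'Great article!'", "Summarise my post on remote work."],
        "output_text": ["Thanks a lot, glad it helped!", "Remote work is about trust, not tools."],
    }
)

path = os.path.join(tempfile.mkdtemp(), "train.jsonl")
df2.to_json(path, orient="records", lines=True)

# Each non-empty line must be a standalone JSON object with exactly the two expected keys
with open(path, encoding="utf-8") as f:
    lines = [json.loads(line) for line in f if line.strip()]

assert all(set(obj) == {"input_text", "output_text"} for obj in lines)

pdRead = pd.read_json(path, lines=True)
print(len(lines), pdRead.shape)
```

The check parses every line on its own with `json.loads`, which mirrors how a JSONL consumer reads the file, rather than loading the whole file as one JSON document.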

Tuning Model Creation

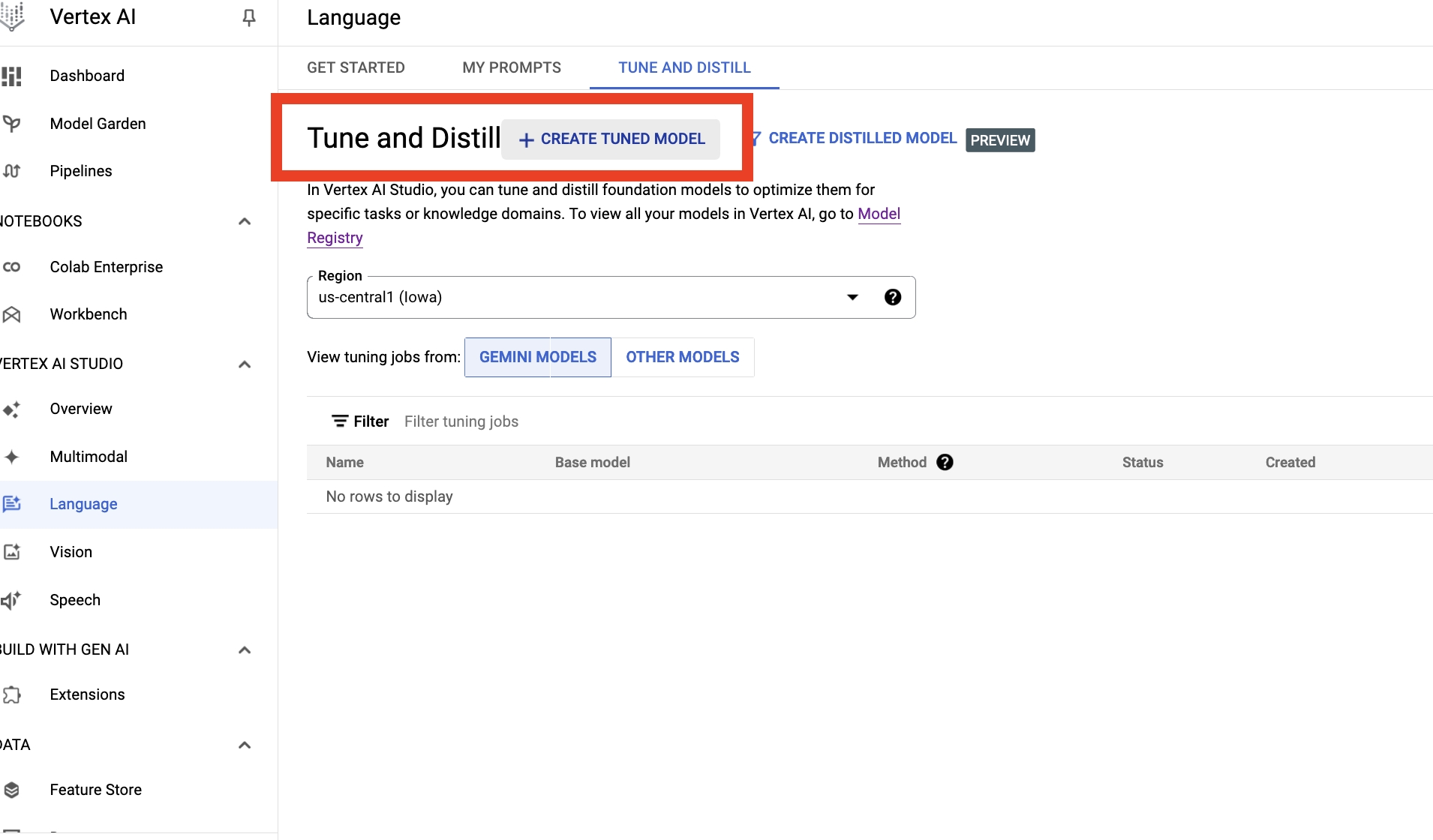

When the dataset is ready, it's time to go to Google Cloud Vertex AI. Create a Google Cloud account if you don't have one, open Vertex AI Studio, select the Language section, and click to create a tune and distill task, as shown in the following image:

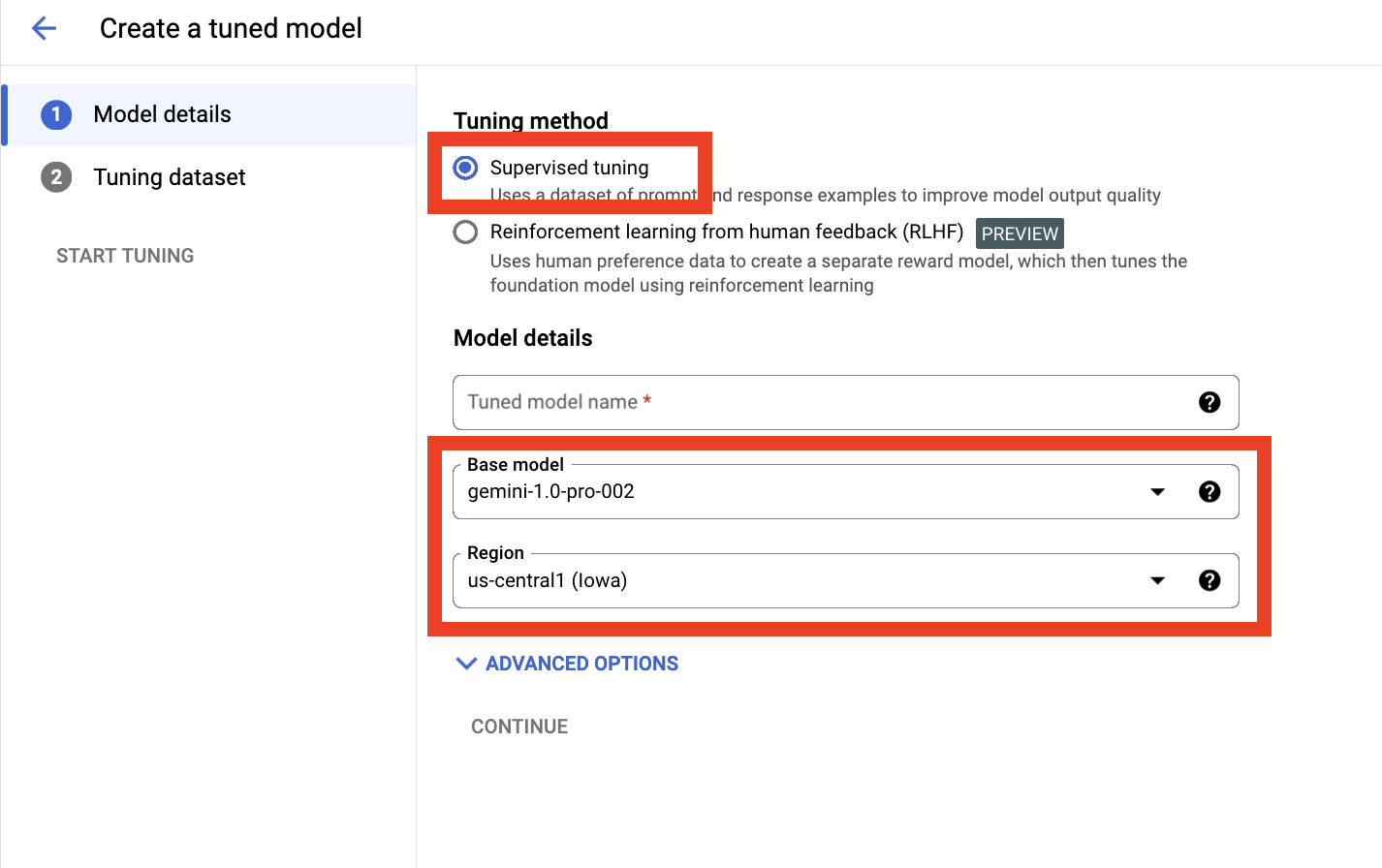

Next, select the tuning method, the model, and the region. Here we chose supervised tuning, Gemini, and us-central, as follows:

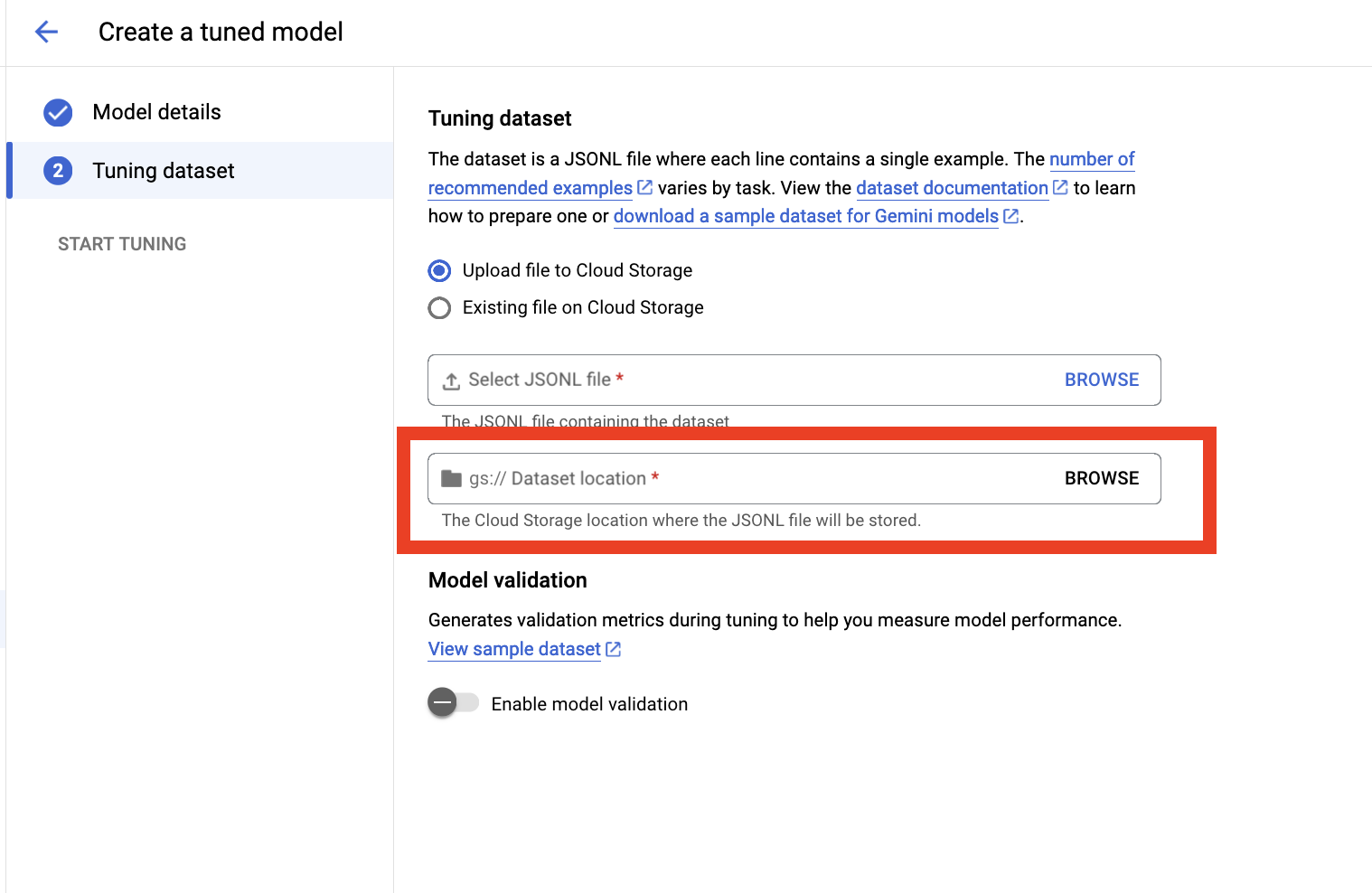

Last but not least, upload the prepared JSONL dataset to Google Cloud Storage and select it here.
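If you prefer to upload the dataset from Python rather than through the console, the sketch below uses the `google-cloud-storage` client library. The bucket name and object path are placeholders, and it assumes the library is installed and your environment has Google Cloud credentials configured (for example via `gcloud auth application-default login`). The helper names `gcs_uri` and `upload_dataset` are hypothetical.

```python
def gcs_uri(bucket_name, blob_name):
    """Build the gs:// URI of a Cloud Storage object."""
    return f"gs://{bucket_name}/{blob_name.lstrip('/')}"


def upload_dataset(local_path, bucket_name, blob_name):
    """Upload a local JSONL file to Cloud Storage and return its gs:// URI."""
    # Imported here so gcs_uri can be used without the library installed
    from google.cloud import storage

    client = storage.Client()  # uses Application Default Credentials
    blob = client.bucket(bucket_name).blob(blob_name)
    blob.upload_from_filename(local_path)
    return gcs_uri(bucket_name, blob_name)


# Example with placeholder names:
# upload_dataset("filename.jsonl", "your-bucket", "tuning/filename.jsonl")
```

Once uploaded, the file appears in the bucket browser of the tuning form, and the returned `gs://` URI identifies the same object.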

Waiting for Tuning and Calling the Model Using Python

While the model is tuning, you can check its status under Pipelines or back in the Language section. The duration depends heavily on the dataset you use to tune the pre-trained model. In my case, it took around 5 hours to finish.
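Rather than refreshing the console for hours, you can poll the job from a script. The sketch below is a generic helper: `get_state` is any function you supply that returns the job's current state as a string. As an assumption, with the Vertex AI SDK something like `lambda: aiplatform.PipelineJob.get(resource_name).state.name` could serve as `get_state` for the tuning pipeline; check the SDK documentation for your version. The helper name `wait_for_job` and the default intervals are hypothetical.

```python
import time

# Terminal states of a Vertex AI pipeline run (assumed names from the PipelineState enum)
TERMINAL_STATES = {
    "PIPELINE_STATE_SUCCEEDED",
    "PIPELINE_STATE_FAILED",
    "PIPELINE_STATE_CANCELLED",
}


def wait_for_job(get_state, poll_seconds=300, timeout_seconds=8 * 3600, sleep=time.sleep):
    """Call get_state() every poll_seconds until a terminal state is seen or the timeout passes."""
    waited = 0
    while True:
        state = get_state()
        if state in TERMINAL_STATES:
            return state
        if waited >= timeout_seconds:
            raise TimeoutError(f"Job still in state {state} after {waited} seconds")
        sleep(poll_seconds)
        waited += poll_seconds
```

The `sleep` parameter is injectable so the loop can be exercised without actually waiting; in real use the defaults check every five minutes for up to eight hours, which leaves headroom over the roughly 5 hours observed above.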

Once tuning is complete, you can go to Model Garden and immediately select the model you tuned with your own dataset. You can also test it with Python. Below is a sample script:

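```python
import vertexai
from vertexai.language_models import TextGenerationModel

# Initialise the SDK with your own project and the region where the tuned model lives
vertexai.init(project="project ID", location="region name")


def tunedModel(prompt, characters):
    # Note: max_output_tokens limits tokens, not characters
    parameters = {
        "max_output_tokens": int(characters),
        "temperature": 0.9,  # higher values give more varied, creative text
        "top_p": 1,
    }

    # get_tuned_model is a class method, so the base model need not be loaded first
    tuned_model = TextGenerationModel.get_tuned_model(
        "projects/project ID/locations/region name/models/tuned model ID"
    )

    response = tuned_model.predict(prompt, **parameters)
    return response.text
```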

Google AI Model Tuning Cost

For details on Vertex AI tuning pricing, we suggest checking Google's official pricing page, which is kept up to date. As a reference from our own content-creation use case: each tuning run cost us about 100 USD on average, used roughly 350,000 English characters of training data, and took approximately 5 hours. We hope these figures give you a reference point for the cost of Google AI tuning.
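As a rough back-of-the-envelope check, the figures above work out to a little under 30 US cents per 1,000 English characters of training data per run. The short calculation below only reproduces that arithmetic from our own averages (100 USD, 350,000 characters, about 5 hours); it is not how Google computes the bill, so see the official pricing page for that.

```python
# Our own averages per tuning run, taken from the figures above
cost_usd = 100
characters = 350_000
hours = 5

cost_per_1k_chars = cost_usd / characters * 1000  # USD per 1,000 characters
cost_per_hour = cost_usd / hours                  # USD per hour of tuning

print(f"{cost_per_1k_chars:.3f} USD per 1,000 characters")  # 0.286 USD per 1,000 characters
print(f"{cost_per_hour:.0f} USD per hour of tuning")         # 20 USD per hour of tuning
```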

Compared with OpenAI or Azure AI, the spending is similar, although the latter sometimes charge less. It varies case by case.

Wrap-up

Fine-tuning is a powerful way to get a specialized, niche-purpose, cutting-edge AI model at comparatively low cost. It saves money and doesn't require you to invest in developing a model from scratch.